ChatGPT, Artificial Intelligence, and Deskilling

Why large language models (LLMs) will deskill the knowledge worker

With the recent release of ChatGPT, the unreasonable effectiveness of large language models (LLMs) in performing many tasks has become clear. LLMs can already generate output equivalent to that of a human of average skill in many domains where the output is text (e.g. articles, ad copy, code). Already, it seems that essays, or even code assignments, are obsolete as a method of examination in universities. Although this is a challenge for universities and the standard of education, we will soon face a much bigger problem. LLMs and recent developments in AI will cause a gradual deskilling of the average knowledge worker, in the same way that automation has already deskilled workers in many other domains over the last century.

In an essay that I wrote a decade ago, I explained how automated systems produce deskilled human operators whose skill level never rises above that of a novice and who are ill-equipped to cope with the rare but inevitable instances when the system fails. At the beginning of my career on a trading floor, I acquired the necessary skills and knowledge to get better at my job primarily via mundane day-to-day tasks, such as making markets on simple products for small sizes. If these tasks are now performed by bots, that does not deskill the senior trader, who has already acquired the expertise to monitor the bot and detect when it fails. But the junior trader is left without a viable path to acquire this expertise, because it is precisely by doing mundane, simple and somewhat repetitive tasks that most people acquire the skills to become an expert in their domain. Automation and AI deny this opportunity to the novice human operator. At a system level, the problem only becomes apparent over time, as older humans who have already acquired their skills and expertise leave the workforce.

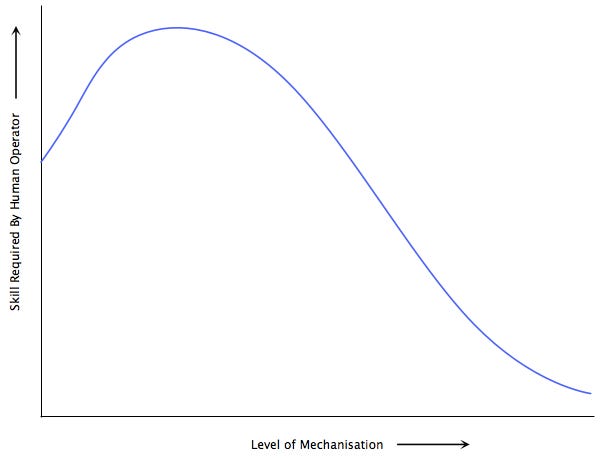

This is not a new phenomenon. The increasing automation of the manufacturing sector in the 20th century led to the progressive deskilling of the human workforce. For example, below is a simplified version of the empirical relationship between mechanisation and human skill that James Bright documented in 1958 (via Harry Braverman’s ‘Labor and Monopoly Capital’).

The advent of computerisation enabled any task that can be performed in a codified manner, i.e. any task that can be translated into an algorithm, to be automated. What has changed with the advent of LLMs and other recent developments in AI is that machines can now perform not just codifiable/legible tasks but also the illegible tasks that are the bread and butter of the jobs that comprise the “knowledge economy”. What has already happened in quantitative domains where numbers are the output will now take place where words and images are the output.